Commander Data Painted, Played Music, Created a Daughter, and Went on Trial Over His Right to Exist. So Why Does Generative AI Feel So Different?

Data’s art and music in Star Trek: The Next Generation make an interesting case: non-human creativity isn’t automatically disturbing. The discomfort around modern AI points somewhere else.

Note:

This post is a philosophical thought experiment about creativity, authorship, and ethics. It isn’t an endorsement of AI-generated art or music, and it isn’t arguing that AI tools should or shouldn’t be used.

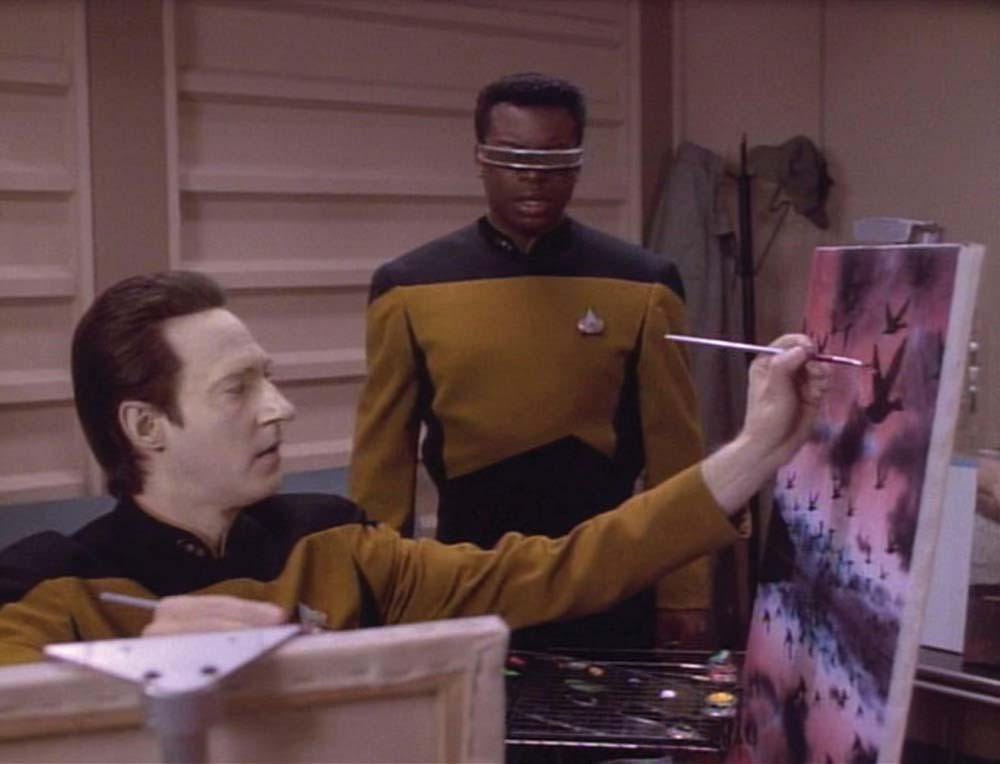

The following YouTube video is about the painting of Commander Data’s daughter, created by using himself as a model.

It’s easy to imagine that many viewers found it intriguing, even moving, that Commander Data could paint, play music, and take care of a cat: a non-human reaching toward craft, taste, and self-expression. Yet that reaction can sit beside real backlash toward generative AI, which carries a different kind of cultural weight than a fictional android with a paintbrush and a violin bow.

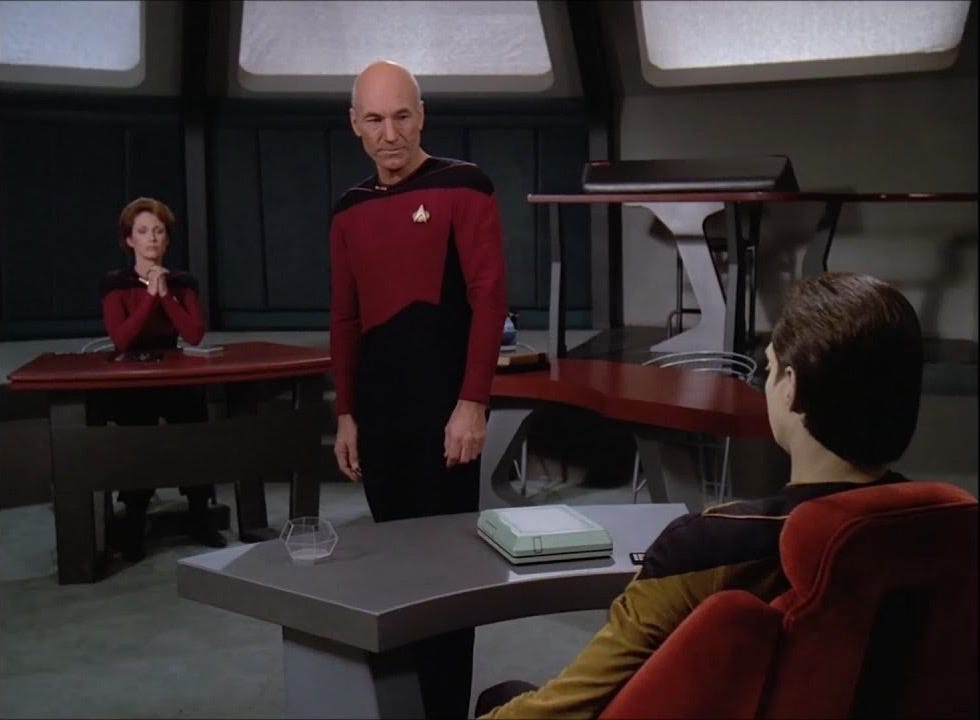

Data is an interesting example because Star Trek: The Next Generation repeatedly frames him as more than a machine that produces outputs. He paints. He plays music. He creates a child. He refuses orders. He ends up in a courtroom where the central question isn’t “how impressive is he?” but what is he—property or person?

Creativity as output vs creativity as agency

A painting or a song can be judged on aesthetics. But when the creator is also the subject of control, creativity becomes a political question:

Who is allowed to create, and who is allowed to say no?

Data’s art and music aren’t interesting because they prove a machine can imitate humans. They’re interesting because they signal intent: practice, taste, choice, and the desire to become something through craft.

The Offspring: Creation turns into responsibility

In The Offspring, Data builds Lal—and suddenly creation has stakes.

A child is not a product demo. A child is a future that can be harmed.

When an institution wants to take that child away for its own purposes, the conflict becomes stark:

Is creation something you’re permitted to do only as long as it remains useful to the institution?

Data’s refusal isn’t a technical malfunction. It’s a moral boundary.

The Trial: Progress vs Consent

In The Measure of a Man, Data is pressured into a legal fight over whether he has the right to refuse being dismantled so he can be studied and replicated.

The rhetoric is familiar: it’s for the greater good; it could advance knowledge; it could benefit many.

The episode exposes a principle that technology debates often dodge:

Utility doesn’t automatically grant permission.

A thing can be valuable and still be wrong to take.

What this may reframe about AI creativity

The surface debate around AI art and AI music often gets stuck on whether the results are “real” or “soulless.” Data’s storyline suggests a different axis:

The moral temperature rises when creativity becomes industrial

Not “one being making art,” but systems built to scale creation endlessly.

The core conflict is governance

Who sets the rules, who opts in, who opts out, who is compensated, who has recourse.

The pressure point is extraction

What gets taken, from whom, under what authority, for whose gain.

In other words, the uneasy feeling isn’t necessarily triggered by the existence of machine-made beauty. It’s triggered by the possibility that creativity—human or synthetic—can be converted into a resource to be seized, standardized, and deployed.

Spot, or the Difference Between Output and Relationship

Data’s cat, Spot, adds a quieter layer to the question of synthetic creativity. Caring for an animal isn’t a performance metric. It’s routine, attention, patience, and responsibility—things that can’t be reduced to impressive results. It reframes Data as someone who doesn’t just produce (art, music, inventions), but who maintains a bond.

That’s a useful contrast for modern AI debates: an image generator can produce endless artifacts, but it doesn’t shoulder ongoing duty toward anyone affected by those artifacts. Spot highlights the missing ingredient people often reach for without naming it—not skill, but stewardship.

Even Aliens Respect Commander Data for His Intelligence and Strength

In The Chase, a Klingon commander doesn’t approach Data as a curiosity or a “machine trying to be human.” He treats him as someone worth testing—and, after the b’aht Qul challenge, as someone worth respecting even more than before. The respect is earned through demonstrated intelligence, composure, and strength, not through sentiment or familiarity.

That detail matters philosophically: recognition crosses species lines when it’s grounded in capability. Data’s value isn’t confined to human standards of emotion or artistry; even outsiders can see his competence and treat it as real.

It sharpens a modern contrast: admiration for AI outputs is common, but respect is harder to place, because respect usually implies an agent behind the ability, someone who can be praised, blamed, trusted, or held accountable.

Two questions that keep getting blended

Meaning: Can a non-human produce a painting or a piece of music that feels aesthetically powerful or emotionally resonant?

Ethics: Was the system built and deployed in a way that respects the people whose work shaped what it can produce?

It appears that a lot of heat comes from treating the ethics question as if it were the meaning question.

“Humans learn from other artists” is true—and also incomplete

Human inspiration tends to involve:

limited exposure over time

slow practice and personal taste

imperfect memory and transformation

visible lineage (influences can be named)

accountability (reputation and norms matter)

Modern generative systems introduce different properties:

scale (training across enormous corpora)

opacity (influences are difficult to trace)

automation (style and genre become prompts)

commercial substitution (outputs can replace paid work)

These are pipeline questions, not “is it beautiful?” questions.

A Commander Data thought experiment

Data the creator: studies, practices, makes choices, develops taste—paintings and music as a craft.

Data the product: assembled by copying artists’ portfolios and musicians’ catalogs without permission, then marketed as “make it in anyone’s style, instantly.”

The second version turns creativity into a product built on access that was never granted.

Three Pressure-Test Questions

When does influence become appropriation?

One line is crossed when value is taken without relationship: no consent, no credit, no compensation, no recourse.

What happens to authorship when style becomes a setting?

If “in the style of X” becomes normal, creative identity shifts from voice to parameter.

Is the unit of harm similarity or substitution?

An output doesn’t need to be a replica to function like a replacement.

A Reframing

This isn’t a fight over whether machines can create.

It’s a dispute over provenance, power, and payment: who supplied the cultural material, who controls the tools, and who absorbs the downside.

Commander Data’s creativity reads like a personal practice.

Modern AI creativity often reads like an industrial capability.

That gap, not the question of whether a machine has a “soul,” is where the difference lives.

But does Commander Data have a soul or not?

Conclusion: So, Why Does Generative AI Feel So Different?

Commander Data’s painting and music feel like the byproduct of a life—practice, taste, restraint, and choice. His creativity reads as self-directed. When he creates Lal, it stops being “output” and becomes responsibility. When he refuses Starfleet’s pressure, it becomes autonomy. When he faces a courtroom in The Measure of a Man, it becomes rights. Even when a Klingon tests him in The Chase, the respect he earns is tied to something deeper than capability: the sense that there is an agent behind the ability.

Generative AI often feels different because it arrives without that arc. It presents capability without personhood, production without stewardship, and scale without a clear moral center. It can generate endlessly, but it cannot meaningfully consent, refuse, take responsibility, or be accountable; those burdens land on the humans and institutions operating it. And without a shared sense of who is answerable, the technology doesn’t feel like a character’s personal pursuit; it feels like a system.

That’s the philosophical gap: Data’s creativity is framed as a life trying to mean something. Generative AI creativity is framed as a tool trying to do more.